The news

During the Salesforce AI Day on June 12 as well as the Salesforce AI Industry Analyst Forum on June 20, Salesforce provided a lot of interesting information on how the company addresses the challenge – or should I say problem – of trust in artificial intelligence. Salesforce sees this trust gap as being caused by hallucinations, lack of context and data security, as well as toxicity and bias. According to Salesforce, the gap is compounded by the need to integrate external models into business software.

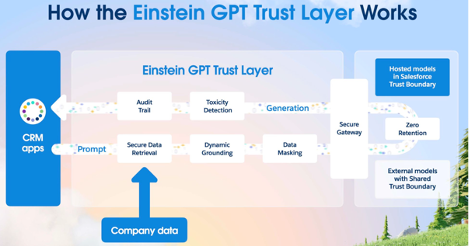

To address this problem, Salesforce has announced its AI Cloud that combines an “Einstein GPT Trust Layer”, Customer 360 and its CRM to offer AI-powered business processes that are built right into the system, based on an AI that can be trusted. The main vehicle is the Einstein GPT Trust Layer that takes care of

- secure data retrieval from business applications,

- dynamic grounding to reduce the risk of hallucinations and to increase response accuracy by automatically enriching prompts with relevant business-owned data,

- data masking, the anonymization of sensitive data to avoid its unintentional exposure to external tools,

- toxicity detection to make sure that generated content adheres to corporate policy, is free of unwanted words or images, and unbiased,

- creating and maintaining an audit trail,

- zero retention, so that the external (or internal) AI does not retain or store any corporate information that gets sent to it via the request.

This trust layer sits between the AI models on one side and the apps and their respective development environments on the other. All requests to the models, along with their data, get routed through this layer, which ensures authorization-protected retrieval of data, the grounding of prompts with this data, and data masking for anonymization. Responses from the models get routed through it as well. This enables an audit trail as well as toxicity detection. Models can be ones within Salesforce, ones developed and deployed by customers in their own infrastructure, and third-party models.

Figure 1 The Salesforce AI Cloud Architecture; source Salesforce
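To make the request and response flow described above a bit more tangible, here is a minimal Python sketch of such a layer. It is not Salesforce's implementation or API. All names (`TrustLayer`, `fake_model`, the `<EMAIL_n>` placeholder format, the word blocklist) are assumptions made purely for illustration, masking is reduced to e-mail addresses, and toxicity detection is reduced to a trivial word list.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
BLOCKLIST = {"idiot", "stupid"}  # hypothetical stand-in for a real toxicity classifier


@dataclass
class TrustLayer:
    model: Callable[[str], str]          # any internal or external model
    records: dict[str, dict[str, str]]   # user -> business data (stand-in for the CRM)
    audit_log: list[dict] = field(default_factory=list)

    def retrieve(self, user: str) -> dict[str, str]:
        # Secure retrieval: a user only gets data they are authorized to see.
        if user not in self.records:
            raise PermissionError(f"{user} is not authorized to read any records")
        return self.records[user]

    @staticmethod
    def ground(prompt: str, data: dict[str, str]) -> str:
        # Dynamic grounding: enrich the prompt with business-owned data.
        context = "\n".join(f"{key}: {value}" for key, value in data.items())
        return f"Context:\n{context}\n\nTask: {prompt}"

    @staticmethod
    def mask(text: str) -> tuple[str, dict[str, str]]:
        # Data masking: swap e-mail addresses for placeholders.
        # The mapping never leaves the layer.
        mapping: dict[str, str] = {}

        def replace(match: re.Match) -> str:
            token = f"<EMAIL_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token

        return EMAIL.sub(replace, text), mapping

    @staticmethod
    def unmask(text: str, mapping: dict[str, str]) -> str:
        for token, value in mapping.items():
            text = text.replace(token, value)
        return text

    @staticmethod
    def is_toxic(text: str) -> bool:
        return any(word in BLOCKLIST for word in re.findall(r"[a-z']+", text.lower()))

    def handle(self, user: str, prompt: str) -> str:
        data = self.retrieve(user)
        masked, mapping = self.mask(self.ground(prompt, data))
        response = self.model(masked)      # only masked text crosses the boundary
        toxic = self.is_toxic(response)
        # Audit trail: record what was sent, what came back, and the verdict.
        self.audit_log.append(
            {"user": user, "sent": masked, "received": response, "blocked": toxic}
        )
        if toxic:
            return "[response withheld by policy]"
        return self.unmask(response, mapping)


# Usage with a fake model that records what it was shown.
seen_by_model: list[str] = []


def fake_model(prompt: str) -> str:
    seen_by_model.append(prompt)
    return "Draft sent to <EMAIL_1>."


layer = TrustLayer(model=fake_model, records={"alice": {"contact": "bob@example.com"}})
reply = layer.handle("alice", "Write a follow-up note.")
print(reply)
```

In this sketch the model only ever sees the placeholder, while the caller receives the unmasked response. Note that nothing in the code can stop the model provider from storing what it receives, which is why retention has to be handled outside the layer itself.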

To round this off, Einstein Studio allows customers to build and deploy models, to train them using data within Salesforce and, at a later stage, to build their own models using a no-code environment.

The bigger picture

Although AI is not new, it is safe to say that generative AI is a game changer. OpenAI managed to get AI out of the realm of data scientists and into the hands of mere mortals. And most of us use business applications on a daily basis.

One of the most daunting problems with AI is that its use involves a number of considerable risks. The one that is currently talked about most in the context of generative AI is the accuracy of responses to prompts; inaccurate responses are often referred to as hallucinations. This is not only problematic in consumer usage but even more so in business usage.

What comes on top in a business context is very much related to data privacy, bias, and profanity. These have also been discussed in the consumer arena. Do you remember Microsoft’s infamous Tay bot? Or, more recently, Samsung, Amazon, Apple, and other companies ordering their staff not to use ChatGPT et al.?

The management of all of these risks is of paramount importance to businesses, for regulatory reasons as well as to protect their own intellectual property. No business can afford to have customer data and/or sensitive corporate data leak into external tools. This is doubly true in strongly regulated industries. But how can this be ensured when the models are not fully understood and when it is not even clear where and how data is stored? How can a business comply with a GDPR request to delete a customer’s data in this case? The management of these risks requires organizational, educational, and cultural measures in companies. These need to be supported or enforced with the help of technology.

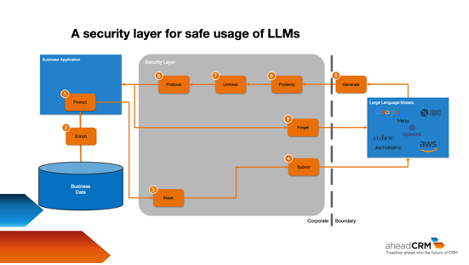

The obvious technical solution for this is an AI security layer, which I outline in my (upcoming, as of this writing) column article on CustomerThink as follows.

Figure 2 An AI security layer; source Thomas Wieberneit

This is of course simplified. Building it is not exactly trivial, but it is possible.

My analysis and point of view

One could, or rather should, say that trust and security are two of the most important assets in business. Customers need to trust that businesses do not collect an inordinate amount of data and, furthermore, that they use the data given by customers only for the purposes the customers consented to. In addition, they need to trust that businesses keep their data safe. A multitude of regulations mandates this. Being trustworthy is even more important in times of AI as a service, when businesses cannot even tell anymore where customer data is stored, as it is learned by the AI and stored in a very decentralized manner, as part of an unknown number of parameters.

To enable this trustworthiness, what could be more natural for a tier one platform vendor than building an AI security layer directly into its own platform? The gateway to external services is already provided by the platform and can be reused by the AI security layer.

This is what Salesforce has done in an exemplary manner with the aptly named Einstein GPT Trust Layer. Kudos for this.

Figure 3 – How the Einstein GPT Trust Layer works; source Salesforce

In my opinion, the most interesting part is the zero-retention portion. Salesforce cannot guarantee on its own that external providers do not store any data. Whenever a prompt is sent to an external vendor, this data leaves Salesforce’s system boundaries. This means that external vendors assume temporary control of this data to provide their services. Masked or not, this data, which can potentially be unmasked, is handled by them.

To account for this, Salesforce has established “zero-retention policies” with these vendors. According to information given during an analyst briefing, these policies ensure that the vendors won’t store any in-flight data, including inputs and outputs, nor will they use it for any purpose besides generating a response to the prompt.

This is quite an important statement that also indicates GDPR compliance, if “policy” can be translated to contract. On the other hand, this makes me curious how the refinement of prompts works in this case. Obviously, for highly security-oriented customers, this also suggests a preference for Salesforce or customer-owned models over external ones.

Overall, this is a great offering that addresses important concerns of the C-suite.

The only qualm that I have is the price tag, which is quite steep, currently starting at US$360,000. For sure, customers can derive good value out of it; this is not the problem. Where I see a challenge is that this is out of reach for most SMBs. I’d love to see an adaptation of this offering combined with offerings like Salesforce Easy or similar.

I am waiting to see when other vendors come forward with a comparable offering. The other tier one vendors especially, but also the tier two vendors, need to make a move now. Salesforce truly let the genie out of the bottle and put them in a tight spot.

Kudos again!